Is AI Trusted Enough for Regulated Industries?

Amid all of the mudslinging between the Department of Defense and Anthropic this week, you may be asking yourself, “Where is this heading?” The high-pitched headlines make it sound like this is (yet another) AI inflection point, and yet for most consumers and businesses, there’s a lot more smoke than fire, at least in the near term.

While this dustup itself is about a philosophical conflict between two entities, it does hint at a core tension in AI usage: trust vs. progress. How safe does AI need to be? How fast do we need to move?

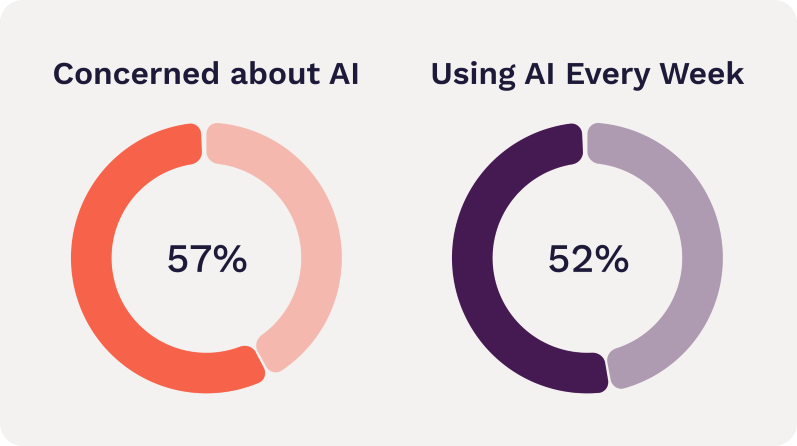

The truth is, we don’t know how this will play out yet. We’re writing the fire code before the first fire has started. But consumers are nonetheless wary. The statistics bear this out: 55-60% are concerned about AI usage, and about the same percentage use generative AI. (About 99% of US adults use AI in some form, such as navigation or search assistance, though most don’t consciously identify it as AI.)

That means there’s at least some overlap between those who use AI and those who are concerned about its safety. If both figures describe the same population, two groups that each cover 55-60% of it must share at least 10%. And that makes sense; anyone who’s used AI has probably also seen it misunderstand an instruction or hallucinate.

Concern rises to 70-80% of Americans when the topic is narrowed to high-stakes uses of AI, such as military decisions, medical decisions, or politics. People recognize that when the stakes are higher, the impact of hallucinations grows. No one wants their brain surgery to be the one where the AI made a mistake.

There are all sorts of arguments on both sides here, and we as a society have explored them already—remember the self-driving car debate from a decade ago? That brought up many of the same concerns. What happens when machines make mistakes? Who’s liable? What error rate can we live with?

That debate shone a light on a truth that’s still relevant today: even though humans mess up more frequently than machines, we’re still more comfortable with human error than machine error. Human error is normal, if unfortunate when it happens to you. Machine error feels tragic and horrifying. A person who runs a red light and injures someone is part of a regrettably expected error rate. A self-driving car that runs a red light and injures someone is a menace to our societal expectation of safety. Fears around AI are no different.

There’s a kernel of logic to this reaction. Humans tend to make one mistake at a time. Machines, with their dazzling efficiency, make mistakes more rarely, but when they do, they can make a lot of them in a hurry. Ever accidentally hit reply-all on a message meant for one person? That’s the kind of amplification of mistakes that machines excel at.

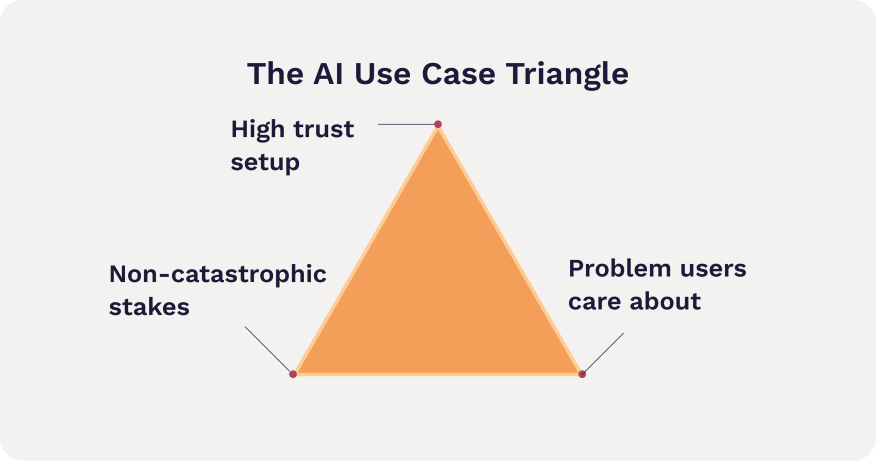

So how do users feel about AI in highly regulated industries? There’s a specific triangle where AI use cases make a lot of sense.

High-trust setup

Problem that’s annoying enough to care about

Non-catastrophic stakes

Let’s take this one at a time.

High-trust setup

Trust is built in layers. The first layer is trust in the company. If your brand has built significant goodwill with your audience, that’s a great start, but it’s not the end of the journey.

The setup for the interaction must also create trust. The software must look and feel credible: it doesn’t have to be groundbreaking, but it should deliver a 75th-percentile experience in the first few interactions. In practice, that means a polished onboarding flow, a good dashboard (even for a first-time user), and consistent, branded communications such as emails.

The setup also needs to handle the basics right. While consumers don’t love jumping through hoops, they do have a good sense of what security ought to be in place. Don’t ask for information you don’t need, and do put guardrails in place that are appropriate for the stakes. In regulated industries, for example, that means MFA (multi-factor authentication) paired with a smooth login experience.

The last step is to describe the AI feature in a clear, trustworthy way. Consumers can smell excessive hype a mile away. Confine your promises to what you know it can do very well. Don’t say “UmbrellaAI can solve all your insurance challenges.” Instead, try something like “I’m UmbrellaAI. I can help with getting a new quote, or finding your policy documents.”

Relevant problem that’s annoying enough to care about

To get users to willingly interact with AI, the problem must be something they actually care about. While a growing number of people have used an LLM (large language model) like ChatGPT in the last week, that figure still hovers around half of the adult population. Of that half, some will have most recently had a good experience, while others are coming straight off an AI fail. If you’ve just had an interaction with AI that made you roll your eyes and tell your coworkers, “Our jobs aren’t going anywhere,” you’re probably not going to be first in line to try the new AI chatbot in your financial advisor’s app. And then there are the people who haven’t tried it yet because they are inherently suspicious of AI.

For all of these reasons, it’s a reasonable assumption that half or more of your audience will need to be coaxed into trying AI. They need a reason, and saving the company money on customer service is not going to sway them.

Therefore, an organization looking to get consumers to directly interact with AI must find something the consumer actually cares about. For example, using AI to check a user’s expenses and recommend tax deductions they may be eligible for is compelling—everyone likes to save money. But what about the trust factor?

You’d have to ask consumers to give you some pretty personal information: access to their bank transactions. If you’re their bank, or a personal finance app, that might feel reasonable. Coming from a fitness tracker app, it wouldn’t. So, we need a problem that’s annoying enough to care about and that falls within the subject matter where you’ve already earned trust.

Non-catastrophic stakes

As alluded to in the opening, consumers have an understandable skepticism of AI for life-or-death stakes. No one wants AI making their medical decisions, despite the fact that some users will happily converse with their favorite LLM about their test results. It’s complicated and more than a little contradictory. Conversation is one thing, but we still want to believe there’s a trusted, qualified human making the most important decisions.

But the stakes can’t be completely trivial either, or no one will care. So how do we find the appropriate stakes? One way is with unobtrusive features that have a built-in backup plan.

AI-assisted search is one example. AI can suggest some results or even a summary answer, and you can still use traditional search to provide more results. This puts AI in the role of enhancing the experience, not limiting it.

AI summaries are another great example. Summarizing a long document (as Copilot and Gemini now do automatically) is perceived by users to be low-stakes, because they still have the full document available if they want to check something.

Another way to lower the stakes is to use AI to assist a human operator. AI can flag high-risk records, or summarize loan applications and highlight unusual values. A person still makes the final call. This kind of AI isn’t directly customer-facing, so it does less for the perception of innovation than consumer-facing AI (unless your organization makes an effort to talk about it publicly). Yet it can make a big difference in efficiency and speed, which does impact consumers.

Think of the difference between knowing that AI flagged things for your surgeon to watch for during surgery, based on your brain scan, and knowing that AI decided to operate and told the surgical robot where to cut.

Knowing when and where to deploy AI

AI in regulated industries doesn’t have to be perfect, but it does have to be chosen carefully based on the level of trust, the user’s interest, and the stakes. Regulated industries are well-positioned to lead with a healthy balance of safety and innovation, because they’ve been walking this line for decades. AI doesn’t change the rules; it just makes the cost of ignoring them a little harder to hide.

Success in regulated industries is about knowing your users well enough to meet them where they are — and being honest enough about your AI to let it prove itself, one meaningful win at a time.